So you want to start A/B testing your site. OK, great! But where do you begin?

Most experts (including us) will advise you to look to best practices and go after “low-hanging fruit” first. That’s great advice, but you’ll soon find out that some best practices are … better than others. And not all low-hanging fruit is worth picking.

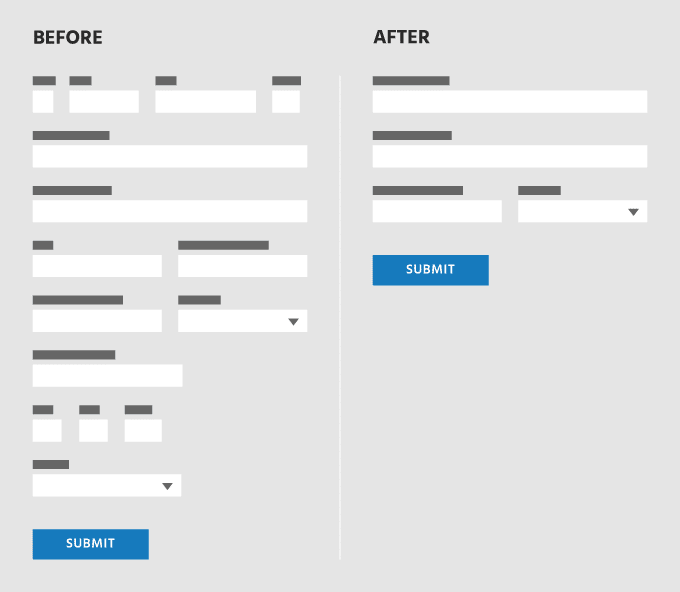

We implement tests on a number of complicated sites: sites with long, time-consuming forms that ask for all manner of personal information. Best practices (and general neighborliness) tell us to avoid asking for unnecessary information on forms. Anything that makes onboarding more laborious will surely hurt conversions, and requests for personal information can be especially off-putting to privacy-conscious visitors.

With one of our clients, we designed an experiment that moved some of these fields further down the funnel, where visitors are more committed. This was no trivial test implementation. It required a frontend form redesign, changes to the on-page validation, and an alternative way of handling submissions on the backend. But surely it would be worthwhile once we saw those conversions skyrocket! Right?

Not this time. After running the test for two weeks, with thousands of visitors per variation, the results were flat. Asking for less personal information up front didn’t lead to an increase in conversions to the next step in the funnel, or any of the subsequent steps for that matter.

…So surely we all mourned the wasted time and energy put into this experiment, took turns blaming each other, and cried into our laptops. Right?

Not at all. In fact, this was an extremely informative test. We learned that visitors to this particular site do not mind parting with personal information. They are motivated and informed, and have generally done their homework before they start onboarding. They understand why the information is being requested, and they trust the site. We could never have safely assumed this, but with data to back it up, we can say it with confidence. (Of course, we’ll continue testing related hypotheses in other experiments, just to be sure…)

There are two lessons here.

First, best practices are best in general, but they don’t always matter in a particular case. What matters is how your own visitors experience your site.

Second, a test doesn’t have to “win” to be a win. The value of a test lies in what you learn from it. If you launched a test confident that you had a winner, only to get inconclusive results, congratulations! You just learned something!
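What does an “inconclusive” result look like in numbers? One common check, though not necessarily the method used in the experiment above, is a two-proportion z-test comparing the conversion rates of the control and the variation. Here’s a minimal sketch in plain Python. The function name `two_proportion_z_test` and the visitor and conversion counts are hypothetical, chosen only for illustration; they are not the client’s data.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for H0: both variations convert at the same rate.

    Hypothetical helper for illustration; assumes samples large enough
    for the normal approximation to hold.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled conversion rate, assuming the null hypothesis (no difference) is true
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution: 2 * (1 - Phi(|z|))
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: 3,000 visitors per variation, 10.0% vs. 10.4% conversion
z, p = two_proportion_z_test(conv_a=300, n_a=3000, conv_b=312, n_b=3000)
print(f"z = {z:.2f}, p = {p:.3f}")
```

With these made-up numbers the variation converts slightly better, but the p-value is far above the conventional 0.05 threshold, so the difference could easily be noise. That’s a “flat” result, and it still tells you something: the change you were sure would matter doesn’t move the needle much for your visitors.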

So is your conversion rate suffering because of your cluttered navigation? Do people bounce because you have too many form fields? You don’t know until you test it. Stop feeling bad about your site and get to know your visitors through experimentation.