Last year, we stepped back and asked a deceptively simple question: What actually changes when web optimization teams get better at picking which tests to run?

Not when they crank up velocity. Not when they unlock more traffic. Just when they make better calls about what deserves a slot on the roadmap.

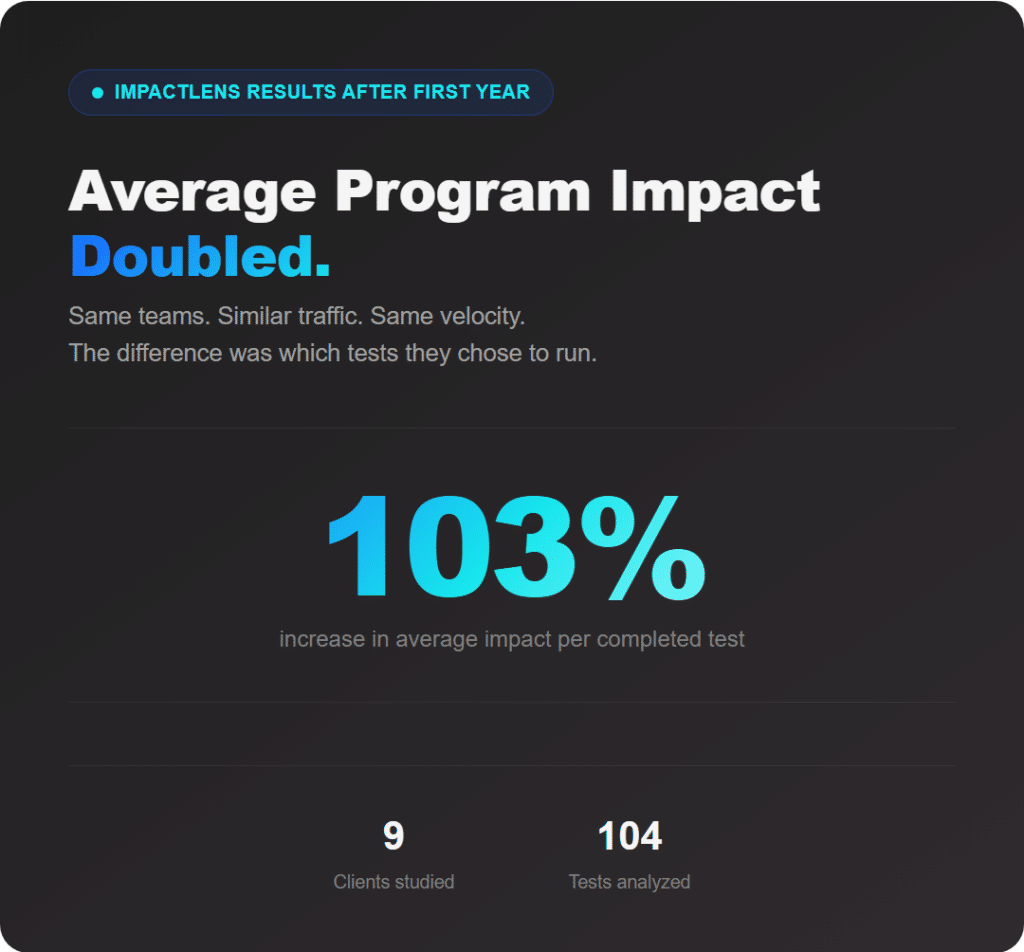

To find out, we looked at nine long-tenured Cro Metrics clients who adopted ImpactLens and compared their performance before and after they started using it consistently.

Across those nine clients, average program impact increased by 103%. Same teams, similar traffic, roughly the same velocity. The main difference was which tests they chose to run.

That result is the headline. But the story of how it happened, and what we learned along the way about our own model, is worth telling in full.

Every Program Has Constraints. The Real Question Is How You Choose Within Them.

No web optimization team operates in a vacuum. Some are limited by traffic. Others by engineering time, budget, roadmap dependencies, or stakeholder politics. Most deal with a shifting mix of all of the above, and it rarely stays stable for long.

Once those constraints show up, the game changes. The question stops being “How do we run more tests?” and becomes “Given our limits, which bets are actually worth placing?”

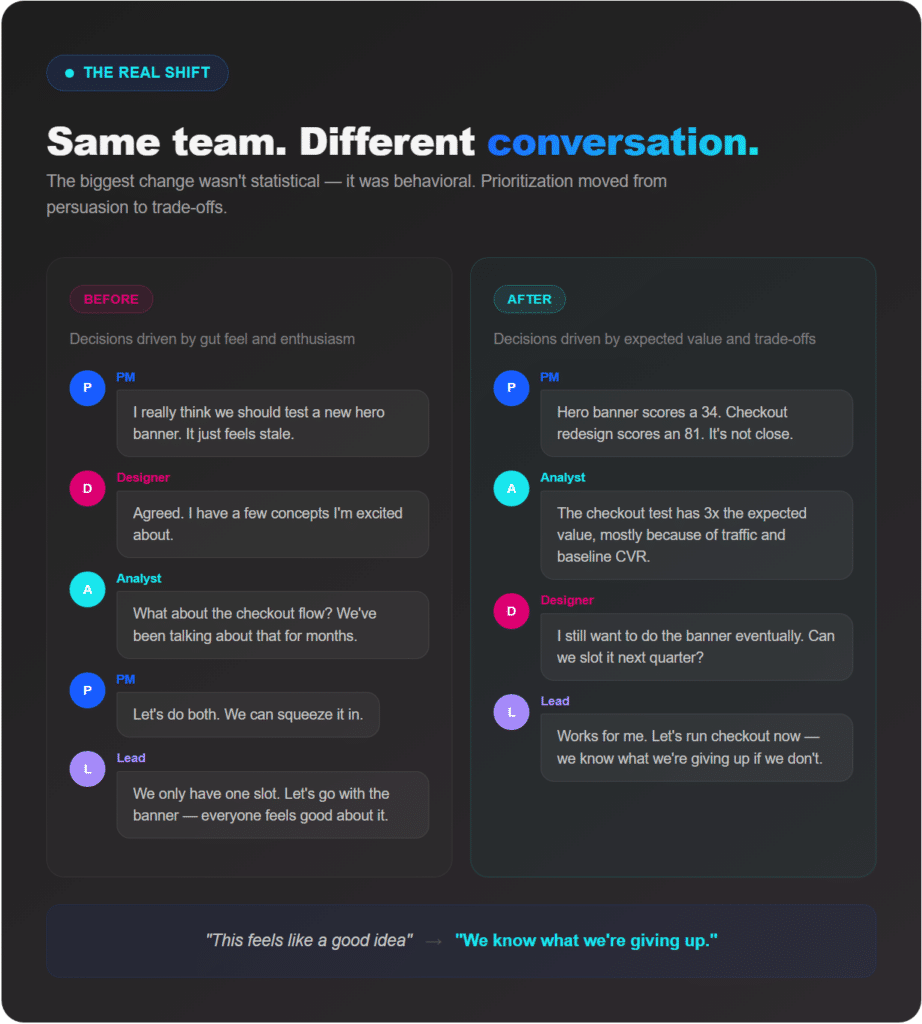

ImpactLens didn’t remove any of those constraints. What it did was help teams see their trade-offs more clearly and make decisions they could actually defend. That alone changed how prioritization conversations unfolded.

What ImpactLens Actually Evaluates

At its core, ImpactLens is a test prioritization engine powered by predictive modeling. Knowing whether a test is likely to win matters, of course, but it’s not enough on its own.

Think about it this way: a test that wins but runs in a low-impact area of the site may barely move the business. On the other hand, a test with massive upside but only a 5% chance of success isn’t necessarily a smart bet either. You need both sides of the picture.

ImpactLens evaluates two variables together:

Win Probability. How likely the idea is to succeed, based on patterns in historical experimentation data.

Impact Potential. If it does win, how much would it actually move the business? This factors in where the test runs, traffic levels, baseline conversion rate, value per conversion, and average lift.

Those two inputs are combined into a probability-weighted impact forecast: essentially an expected-value calculation for each proposed test.

That reframing changes the conversation entirely. Instead of asking “Is this a good idea?”, teams start asking “Is this the highest expected-value use of our time, budget, and political capital right now?”

It’s a subtle shift, but it changes behavior in ways that compound over time.
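A minimal sketch of the probability-weighted forecast described above, assuming impact potential is simply the product of the factors listed (traffic × baseline conversion rate × value per conversion × average lift) and that win probability has already been estimated. ImpactLens’s actual model and weighting aren’t described in this post, so the `TestIdea` fields, the multiplicative formula, and all numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TestIdea:
    # Hypothetical fields; the real ImpactLens inputs and formula are not public.
    name: str
    win_probability: float       # 0..1, e.g. from historical test patterns
    monthly_visitors: int        # traffic reaching the tested page or area
    baseline_cvr: float          # baseline conversion rate, 0..1
    value_per_conversion: float  # e.g. revenue per conversion, in dollars
    avg_lift: float              # expected relative lift if it wins, e.g. 0.05


def impact_potential(t: TestIdea) -> float:
    """Monthly value gained if the test wins (assumed multiplicative model)."""
    baseline_value = t.monthly_visitors * t.baseline_cvr * t.value_per_conversion
    return baseline_value * t.avg_lift


def expected_impact(t: TestIdea) -> float:
    """Probability-weighted impact: an expected-value estimate for the test."""
    return t.win_probability * impact_potential(t)


def rank_by_expected_impact(ideas: list[TestIdea]) -> list[TestIdea]:
    """Order ideas from highest to lowest expected value."""
    return sorted(ideas, key=expected_impact, reverse=True)


if __name__ == "__main__":
    ideas = [
        # Likely winner, but on a low-traffic page.
        TestIdea("footer copy", 0.60, 5_000, 0.02, 80.0, 0.05),
        # Big upside, long shot.
        TestIdea("checkout redesign", 0.05, 200_000, 0.03, 80.0, 0.20),
        # Moderate odds on a high-traffic page.
        TestIdea("PDP social proof", 0.35, 150_000, 0.03, 80.0, 0.04),
    ]
    for idea in rank_by_expected_impact(ideas):
        print(f"{idea.name:18s} ${expected_impact(idea):,.0f}/month")
```

With these made-up inputs, the moderate-odds idea on a high-traffic page (about $5,040/month expected) narrowly beats the 5%-odds checkout redesign (about $4,800) and far outranks the likely-but-small footer test (about $240), so both win probability and impact potential shape the ranking.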

How We Measured the Change

We ran a longitudinal comparison across nine clients who had at least 12 months of program history before adopting ImpactLens, used it consistently in their prioritization process, and maintained consistent year-over-year traffic and testing velocity.

Our primary metric was average impact per completed test. We compared each client’s trailing 12-month average before adoption with its average over the 6–12 months after adoption, measuring impact as the actual observed lift against that client’s primary KPI, whether revenue, leads, or another core metric.

We included every completed test: wins, losses, and inconclusive results. No cherry-picking.
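A minimal sketch of the before/after metric, assuming each completed test is logged with its observed impact on the client’s primary KPI (so losses can be negative and inconclusive results near zero). The post doesn’t say exactly how the nine clients were combined into the headline figure; this sketch averages the per-client percentage changes, which is one plausible reading. Function names and numbers are hypothetical.

```python
from statistics import mean


def avg_impact_per_test(impacts: list[float]) -> float:
    """Mean observed impact across ALL completed tests: wins, losses, and
    inconclusive results alike (no filtering to winners)."""
    if not impacts:
        raise ValueError("no completed tests in this window")
    return mean(impacts)


def pct_change(before: float, after: float) -> float:
    """Relative change from before to after, in percent."""
    if before <= 0:
        raise ValueError("baseline average must be positive for a % change")
    return (after - before) / before * 100.0


def program_change(clients: dict[str, tuple[list[float], list[float]]]) -> float:
    """Average of per-client % changes (assumed aggregation; the post does not
    specify). Each value is (trailing 12 months before, 6-12 months after)."""
    changes = [
        pct_change(avg_impact_per_test(before), avg_impact_per_test(after))
        for before, after in clients.values()
    ]
    return mean(changes)


if __name__ == "__main__":
    # Hypothetical per-test impacts (e.g. incremental monthly revenue, $k).
    clients = {
        "client_a": ([4.0, -1.0, 0.0, 3.0], [6.0, 2.0, 0.0, 8.0]),
        "client_b": ([2.0, 0.0, 1.0], [3.0, 1.0, 2.0]),
    }
    print(f"{program_change(clients):.1f}%")
```

Averaging per-client changes keeps one high-volume client from dominating; pooling every test into a single before/after average would instead weight clients by how many tests they ran.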

Across the nine clients, average impact per test increased by 103%. That’s the number that matters most, because it reflects what actually happened in the real world. Teams made better choices, and those choices produced meaningfully better outcomes.

How Accurate Were the Forecasts?

Here’s where we want to be candid.

Each of the 104 completed tests had a probability-weighted impact estimate. For each client, we compared predicted impact with actual realized impact, then averaged accuracy across all nine clients.
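The post doesn’t specify the accuracy metric, so this sketch assumes one: for each client, accuracy is 1 minus the absolute percentage error between total predicted and total realized impact (floored at 0), and the per-client scores are averaged so each client counts equally. The function names and inputs are hypothetical.

```python
from statistics import mean


def client_accuracy(predicted: list[float], actual: list[float]) -> float:
    """Accuracy for one client's tests, ASSUMED here to be
    1 - |total predicted - total actual| / |total actual|, floored at 0.
    The post does not specify the exact metric."""
    if len(predicted) != len(actual):
        raise ValueError("need one prediction per completed test")
    pred_total, actual_total = sum(predicted), sum(actual)
    if actual_total == 0:
        raise ValueError("accuracy undefined when realized impact sums to zero")
    error = abs(pred_total - actual_total) / abs(actual_total)
    return max(0.0, 1.0 - error)


def overall_accuracy(clients: dict[str, tuple[list[float], list[float]]]) -> float:
    """Average the per-client accuracies so each client counts equally."""
    return mean(client_accuracy(p, a) for p, a in clients.values())


if __name__ == "__main__":
    # Hypothetical (predicted, actual) impacts per completed test, per client.
    clients = {
        "client_x": ([10.0, 5.0], [8.0, 2.0]),  # overshoots by 50%
        "client_y": ([4.0, 4.0], [6.0, 4.0]),   # undershoots by 20%
    }
    print(f"{overall_accuracy(clients):.0%}")
```

The floor at 0 keeps a forecast that misses by more than 100% from producing a negative score; a per-test error metric would be an equally reasonable choice.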

Forecast accuracy came in at 50%.

That’s not where we want it to be, and we’re not going to frame it as something it isn’t. Predicting the precise impact of an experiment is genuinely hard, and our model has real room to improve on that front.

But here’s what makes the overall picture more nuanced: even at 50% forecast accuracy, the programs that used ImpactLens still doubled their impact per test. That tells us something important about where the value is actually coming from.

The model doesn’t need to be a perfect oracle to be useful. What it does well, even now, is separate high-potential tests from low-potential ones. It pushes weaker ideas down the list and surfaces stronger ones. The relative ranking was doing meaningful work even when the absolute predictions were off.

In other words, the model was directionally useful enough to change which tests got prioritized, and those prioritization changes drove real results. That’s a foundation we can build on.
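The gap between weak absolute accuracy and useful ranking can be made concrete with two different measures. This sketch uses hypothetical numbers and Spearman’s rank correlation, a standard statistic chosen here for illustration (the post doesn’t say how Cro Metrics evaluates ranking quality): every forecast overshoots its realized impact by 50% or more, yet the order of the four tests is exactly right.

```python
def ranks(values: list[float]) -> list[int]:
    """Rank of each value (0 = smallest). Assumes no ties, to keep this short."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0] * len(values)
    for rank, idx in enumerate(order):
        result[idx] = rank
    return result


def spearman_rho(x: list[float], y: list[float]) -> float:
    """Spearman rank correlation, 1 - 6*sum(d^2) / (n*(n^2 - 1)); valid only without ties."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two equal-length lists with at least two items")
    d_squared = sum((rx - ry) ** 2 for rx, ry in zip(ranks(x), ranks(y)))
    return 1 - 6 * d_squared / (n * (n * n - 1))


def mean_abs_pct_error(predicted: list[float], actual: list[float]) -> float:
    """Average of |predicted - actual| / |actual| across tests."""
    return sum(abs(p - a) / abs(a) for p, a in zip(predicted, actual)) / len(actual)


if __name__ == "__main__":
    # Hypothetical forecasts vs. realized impact for four tests.
    predicted = [12.0, 30.0, 5.0, 50.0]
    actual = [8.0, 18.0, 3.0, 30.0]
    print(f"mean abs % error: {mean_abs_pct_error(predicted, actual):.1%}")
    print(f"Spearman rho:     {spearman_rho(predicted, actual):.2f}")
```

Here the average forecast error is 62.5%, while the rank correlation is a perfect 1.0. A process that only needs to decide which tests go first depends mostly on the second number.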

What Actually Changed Inside These Programs

The biggest shift wasn’t statistical. It was behavioral.

Teams started killing low-potential ideas earlier. Prioritization debates moved away from persuasion and toward trade-offs. Conversations became less about who felt strongly and more about what the expected value actually said.

That doesn’t mean they blindly followed the model. Sometimes a lower-scoring test still ran for perfectly good strategic reasons: launching a new product line, validating a leadership hypothesis, exploring a new initiative, or even just because the team had strong collective conviction about an idea.

The difference was awareness. Before ImpactLens, the reasoning often boiled down to “This feels like a good idea.” After, it sounded more like “We know what we’re giving up by choosing this over that.”

That’s the line that separates busy programs from effective ones. And notably, that behavioral shift delivered a 103% improvement in impact even while the underlying forecast model still has significant room to grow. We think that says something about the power of structured prioritization on its own.

Why This Matters

Testing program maturity isn’t about endlessly increasing velocity. At some point, every program hits real constraints: engineering capacity, budget ceilings, stakeholder friction, limited political capital. When that happens, leverage shifts from speed to selection.

If every test slot represents scarce resources, the question that matters most is whether those slots are going to the highest-impact opportunities. For these nine clients, improving that allocation doubled the average return per test.

That’s not magic or hype. It’s better decision-making. And over time, better bets compound.

What’s Next

The 103% impact improvement tells us the approach works. The 50% forecast accuracy tells us the model has a long way to go. We’re treating both of those signals seriously.

We’ve now onboarded 22 clients in total, which means the dataset behind these forecasts keeps getting richer. More programs, more variation, more real-world outcomes to learn from.

We’re also retraining the predictive model with more advanced tagging and LLM-based classification to better capture patterns across experiments, and we’re reevaluating the scoring algorithm with a specific focus on closing the gap between predicted and actual impact.

The goal is a model that’s not just directionally right, but meaningfully precise. We’re not there yet, and we’d rather be honest about that than oversell where we are.

If you’re running an experimentation program and feeling the pressure of real constraints, this is the problem we’re focused on: helping teams make sharper bets with the slots they have. Even in its current form, that framework doubled results for nine clients. As the model improves, we expect the compounding to continue.

If that kind of leverage sounds like what your program needs, let’s talk.
